RISE: Randomized Input Sampling for Explanation of Black-box Models

Boston University

Abstract

Deep neural networks are increasingly being used to automate data analysis and decision making, yet their decision process remains largely unclear and difficult to explain to end users. In this paper, we address the problem of Explainable AI for deep neural networks that take images as input and output a class probability. We propose an approach called RISE that generates an importance map indicating how salient each pixel is for the model's prediction. In contrast to white-box approaches that estimate pixel importance using gradients or other internal network state, RISE works on black-box models. It estimates importance empirically by probing the model with randomly masked versions of the input image and obtaining the corresponding outputs. We compare our approach to state-of-the-art importance extraction methods using both an automatic deletion/insertion metric and a pointing metric based on human-annotated object segments. Extensive experiments on several benchmark datasets show that our approach matches or exceeds the performance of other methods, including white-box approaches.

Method

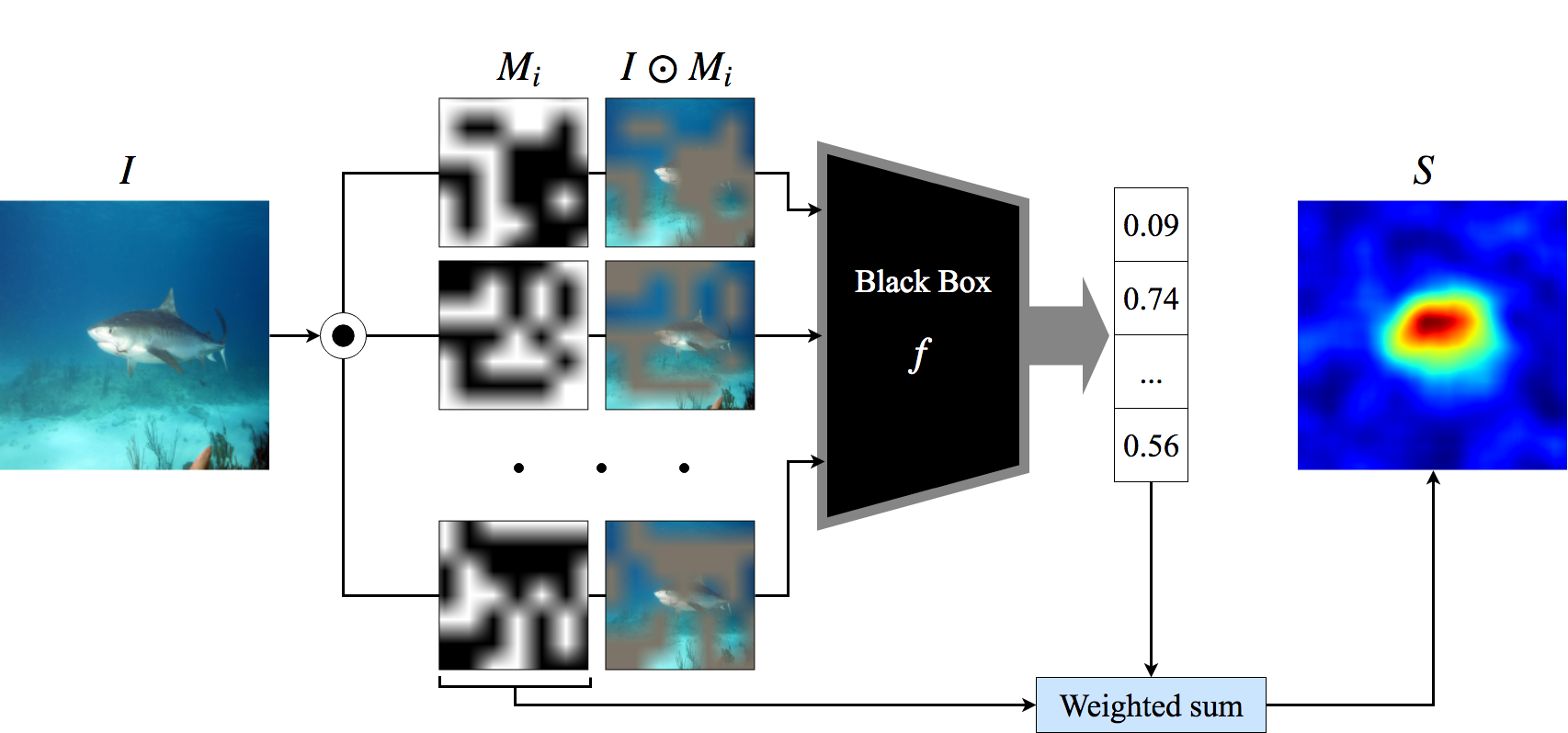

RISE estimates pixel importance empirically by sampling random binary masks, applying them to the input image, and recording the model's output for each masked version. The final saliency map is computed as a weighted sum of the masks, where the weights are the model's output scores.
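The procedure can be sketched in a few lines of NumPy. This is a minimal illustration under our own assumptions, not the authors' implementation. The helper names `generate_masks` and `rise_saliency`, the grid size `s`, the keep probability `p`, and the nearest-neighbour upsampling are all hypothetical choices; the paper, as we understand it, upsamples a small random grid bilinearly to obtain smooth masks. `model` stands for any black-box callable that maps a batch of images to class scores, and only its outputs are used.

```python
import numpy as np


def generate_masks(n_masks, s, p, input_size, rng):
    """Hypothetical helper: sample n_masks random masks of shape (H, W).

    Each mask starts as an (s + 1) x (s + 1) binary grid whose cells are kept
    with probability p. The grid is upsampled by nearest-neighbour repetition
    (an assumption; bilinear upsampling would give smooth masks) and randomly
    shifted before being cropped to H x W.
    """
    h, w = input_size
    cell_h, cell_w = -(-h // s), -(-w // s)  # ceil(H / s), ceil(W / s)
    grids = (rng.random((n_masks, s + 1, s + 1)) < p).astype(np.float32)
    masks = np.empty((n_masks, h, w), dtype=np.float32)
    for i in range(n_masks):
        up = np.repeat(np.repeat(grids[i], cell_h, axis=0), cell_w, axis=1)
        dy, dx = rng.integers(0, cell_h), rng.integers(0, cell_w)  # random shift
        masks[i] = up[dy:dy + h, dx:dx + w]
    return masks


def rise_saliency(model, image, masks, p):
    """Hypothetical helper: weighted sum of masks, weighted by model scores.

    image: (H, W, C) array; masks: (N, H, W) array.
    model: black-box callable mapping (N, H, W, C) -> (N, K) class scores.
    Returns a (K, H, W) array with one saliency map per class.
    """
    masked = image[None] * masks[..., None]  # apply every mask: (N, H, W, C)
    scores = model(masked)                   # query the black box: (N, K)
    # sum_i scores[i, k] * masks[i] for every class k -> (K, H, W)
    sal = np.tensordot(scores, masks, axes=([0], [0]))
    # normalise by N * E[M]; every pixel of these masks is 1 with probability p
    return sal / (masks.shape[0] * p)


# Toy usage with a hypothetical black box whose single class score is the
# mean intensity of the top-left 8 x 8 corner of a 16 x 16 image.
def toy_model(batch):
    return batch[:, :8, :8, :].mean(axis=(1, 2, 3))[:, None]


rng = np.random.default_rng(0)
image = np.ones((16, 16, 1), dtype=np.float32)
masks = generate_masks(4000, s=2, p=0.5, input_size=(16, 16), rng=rng)
sal = rise_saliency(toy_model, image, masks, p=0.5)
print(sal.shape)  # (1, 16, 16)
```

Dividing by `N * p` turns the weighted sum into an estimate of the expected score given that a pixel is visible. Because the divisor is the same for every pixel, it rescales the map without changing which regions rank highest; in the toy example the top-left corner receives the largest values.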

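Formally, given a black-box classifier f and an input image I, RISE defines the importance of a pixel λ as the expected output over randomly sampled masks M, conditioned on that pixel being visible (M(λ) = 1):

$$S_{I,f}(\lambda) = \mathbb{E}_M\left[f(I \odot M) \mid M(\lambda) = 1\right]$$

where ⊙ denotes element-wise multiplication of the image by the mask.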
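Writing the conditional expectation as a sum over masks shows why a weighted sum of masks estimates it. The steps below assume binary masks, for which P[M = m, M(λ) = 1] = m(λ) P[M = m] and P[M(λ) = 1] = E[M(λ)], and N masks M_i drawn independently:

$$
\begin{aligned}
S_{I,f}(\lambda) &= \mathbb{E}_M\left[f(I \odot M) \mid M(\lambda) = 1\right]
= \sum_m f(I \odot m)\, P[M = m \mid M(\lambda) = 1] \\
&= \frac{1}{P[M(\lambda) = 1]} \sum_m f(I \odot m)\, m(\lambda)\, P[M = m]
= \frac{\mathbb{E}_M\left[f(I \odot M)\, M(\lambda)\right]}{\mathbb{E}[M(\lambda)]} \\
&\approx \frac{1}{N\, \mathbb{E}[M(\lambda)]} \sum_{i=1}^{N} f(I \odot M_i)\, M_i(\lambda)
\end{aligned}
$$

The last line is a weighted sum of the masks with the scores f(I ⊙ M_i) as weights. If every mask cell is kept independently with probability p, then E[M(λ)] = p and the normalizer is N p.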

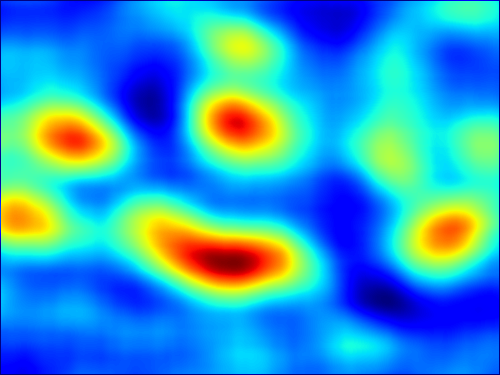

Example saliency maps produced by RISE on ImageNet images. Highlighted regions indicate the most influential pixels for the model's prediction.

Results

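We evaluate RISE with the automatic deletion and insertion metrics and with a pointing game on MS COCO. Deletion measures how quickly the class probability drops as the pixels ranked most important by the saliency map are removed, so a lower area under the curve (AUC) is better; insertion measures how quickly the probability rises as those pixels are added, so a higher AUC is better. RISE matches or surpasses state-of-the-art white-box methods despite having no access to model internals.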

| Method | Type | Deletion AUC ↓ | Insertion AUC ↑ |
|---|---|---|---|
| Gradient | White-box | 0.152 | 0.350 |
| Grad-CAM | White-box | 0.100 | 0.493 |
| LIME | Black-box | 0.115 | 0.422 |
| RISE (Ours) | Black-box | 0.085 | 0.547 |
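Both scores in the table are areas under a curve of class probability versus the fraction of pixels changed. The sketch below shows one way to compute them and is not the authors' evaluation code. Its assumptions: the function names are hypothetical, deleted pixels are replaced by zeros, the insertion start image (`blurred`) is supplied by the caller (the paper starts from a blurred copy of the image), pixels are changed `step` at a time, and the AUC uses the trapezoidal rule.

```python
import numpy as np


def _auc(xs, ys):
    """Trapezoidal area under the curve y(x)."""
    xs = np.asarray(xs, dtype=np.float64)
    ys = np.asarray(ys, dtype=np.float64)
    return float(np.sum((xs[1:] - xs[:-1]) * (ys[1:] + ys[:-1]) / 2.0))


def causal_curve(model, start, finish, saliency, class_idx, step=1):
    """Hypothetical helper: turn `start` into `finish`, most salient pixels first.

    start, finish: (H, W, C) images; saliency: (H, W) map.
    model: black-box callable mapping (N, H, W, C) -> (N, K) probabilities.
    Returns (fraction of pixels changed, class probability) after each step.
    """
    h, w, _ = start.shape
    n = h * w
    order = np.argsort(saliency.ravel())[::-1]  # most salient pixel first
    current = start.copy()
    fracs = [0.0]
    probs = [float(model(current[None])[0, class_idx])]
    for i in range(0, n, step):
        rows, cols = np.unravel_index(order[i:i + step], (h, w))
        current[rows, cols] = finish[rows, cols]
        fracs.append(min(i + step, n) / n)
        probs.append(float(model(current[None])[0, class_idx]))
    return np.array(fracs), np.array(probs)


def deletion_auc(model, image, saliency, class_idx, step=1):
    """Deletion: replace the most salient pixels with zeros first (lower is better)."""
    xs, ys = causal_curve(model, image, np.zeros_like(image), saliency, class_idx, step)
    return _auc(xs, ys)


def insertion_auc(model, image, blurred, saliency, class_idx, step=1):
    """Insertion: copy the most salient pixels into `blurred` first (higher is better)."""
    xs, ys = causal_curve(model, blurred, image, saliency, class_idx, step)
    return _auc(xs, ys)


# Toy check with a hypothetical model whose only class "probability" is the
# mean intensity of the top-left 4 x 4 corner of an 8 x 8 image.
def toy_model(batch):
    return batch[:, :4, :4, :].mean(axis=(1, 2, 3))[:, None]


image = np.ones((8, 8, 1), dtype=np.float32)
good = np.zeros((8, 8))
good[:4, :4] = 1.0              # saliency that points at the relevant corner
bad = 1.0 - good                # saliency that points everywhere else
blurred = np.zeros_like(image)  # stand-in for a blurred copy of the image

print(deletion_auc(toy_model, image, good, 0))            # 0.125
print(deletion_auc(toy_model, image, bad, 0))             # 0.875
print(insertion_auc(toy_model, image, blurred, good, 0))  # 0.875
```

In the toy check, the saliency map that highlights the region the model actually uses gets the lower deletion AUC and a high insertion AUC, which is the behaviour the two metrics are meant to reward.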

BibTeX

@inproceedings{Petsiuk2018rise,
  author = {Vitali Petsiuk and Abir Das and Kate Saenko},
  title = {RISE: Randomized Input Sampling for Explanation of Black-box Models},
  booktitle = {British Machine Vision Conference (BMVC)},
  year = {2018},
  url = {https://arxiv.org/abs/1806.07421}
}